Since the lockdown started we have heard this statement quite a few times. The “Skype” here refers to Skype for Business on-prem server edition. Organisations that currently run an on-prem Skype for Business environment have started to notice that Microsoft Teams delivers better meeting quality now that the majority of their workforce works from home. As a result, more and more businesses have sped up their transition to Teams by putting a hybrid configuration in place and enabling “Skype for Business with Teams collaboration and meetings” mode, so that meetings happen in Teams while peer-to-peer and PSTN communication remain in Skype.

However, thinking about this, those organisations have had Skype on-prem for years and have all been “happily married”. What exactly happened to make the Skype on-prem meeting experience fall from grace?

The key here is “work from home”.

To illustrate this clearly, we have to get a bit networky. I have prepared some diagrams to make things a bit visual and hopefully easier to take in.

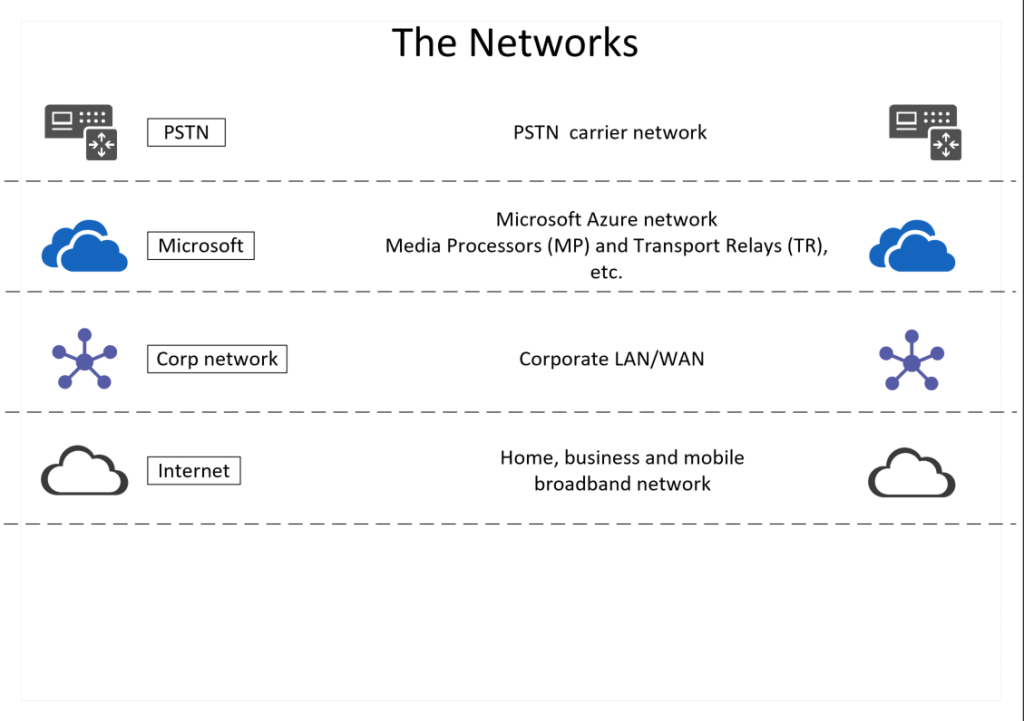

First of all, there are a few networks that we’ll be talking about. They operate in parallel to each other and each serves a different purpose.

Because we are talking about conferencing specifically today, let’s zoom in on that.

In conferencing, there is this thing called the MCU (Multipoint Control Unit). The MCU isn’t a Skype thing or a Teams thing. It’s a general conferencing concept. It is the element of the system that mixes all the audio and video feeds together, merging individual streams into a group conversation and therefore producing a conference. So, although products like Skype for Business and Teams are the new shiny UC platforms, they still need an MCU or equivalent for conferencing. And the quality of the meeting experience largely depends on whether the user endpoints can talk to the MCU in the most efficient way.
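To make the "mixing" idea a bit more concrete, here is a minimal sketch of what an audio mixer does at its simplest: for each attendee, add up everyone else's audio samples so that nobody hears their own voice back. This is only an illustration of the concept. The function name `mix_minus`, the 16-bit sample format, and the sample values are my assumptions, and a real MCU in Skype for Business or Teams does far more (decoding, buffering, video, and so on).

```python
from typing import Dict, List

# Assumption: audio arrives as signed 16-bit PCM samples.
INT16_MIN, INT16_MAX = -32768, 32767


def mix_minus(streams: Dict[str, List[int]]) -> Dict[str, List[int]]:
    """Return, per attendee, the sum of every OTHER attendee's samples.

    Sums are clipped to the 16-bit range so loud overlaps do not overflow.
    """
    if not streams:
        return {}
    # Only mix as many samples as the shortest stream provides.
    frame_len = min(len(samples) for samples in streams.values())
    # Sum of all attendees at each sample position.
    totals = [sum(s[i] for s in streams.values()) for i in range(frame_len)]
    mixed: Dict[str, List[int]] = {}
    for name, samples in streams.items():
        # Subtract the attendee's own audio from the total, then clip.
        mixed[name] = [
            max(INT16_MIN, min(INT16_MAX, totals[i] - samples[i]))
            for i in range(frame_len)
        ]
    return mixed


if __name__ == "__main__":
    # Hypothetical attendees and sample values, purely for illustration.
    feeds = {"alice": [100, 200], "bob": [10, 20], "carol": [1, 2]}
    print(mix_minus(feeds))
```

Every attendee's stream has to reach this one mixing point and the mixed result has to travel back, which is why the path between the endpoint and the MCU matters so much.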

Let’s take a look at the MCU in Skype on-prem. Typically, the MCU is located on the Skype for Business Front End server or pool. Before the COVID-19 lockdown, the majority of the workforce were office based. That means when people were joining Skype meetings most of the attendees, if not all, were “on-net” with the Skype for Business server. The traffic could travel from the users to the MCU via the controlled and optimised corporate LAN/WAN, which in turn delivered good quality and a good experience for all.

Now, let’s send everyone to work from home. All of a sudden, you are no longer “on-net” with the Skype for Business servers. You are off the net, the corp net. Skype on-prem relies on its Edge role to accommodate remote users. The Skype for Business Edge server sits next to the Front End server “in the office” but faces the internet, and acts as the Media Relay between the users at home and the Front End server “in the office”.

The traffic from all attendees will now have to traverse the uncontrolled internet to get to the MCU “in the office”, or more realistically the datacentre. Some people may live closer to the datacentre, some may be further away. Some people may have good home broadband all to themselves, some may have kids streaming and gaming while they are trying to join a meeting. Some ISPs may take 5 hops to reach your company’s datacentre, some may take 15. What this means is that there is a much higher chance for your Skype for Business traffic to suffer from high latency (people end up talking over each other), high packet loss (robotic voice), and/or high jitter (missing words or syllables in speech).
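If you want to put numbers on jitter and packet loss, here is a rough sketch of how they can be estimated from a list of received packets. The jitter calculation follows the smoothed interarrival estimate described in RFC 3550 (the RTP specification). The function names, the millisecond units, and the sample values are my own assumptions for illustration, and the loss calculation ignores sequence number wraparound.

```python
from typing import List, Tuple


def interarrival_jitter(packets: List[Tuple[float, float]]) -> float:
    """Smoothed jitter estimate in the style of RFC 3550.

    packets: (send_time_ms, receive_time_ms) pairs in arrival order.
    """
    jitter = 0.0
    for (s_prev, r_prev), (s_cur, r_cur) in zip(packets, packets[1:]):
        # How much the spacing at the receiver differs from the
        # spacing at the sender for this pair of packets.
        d = (r_cur - r_prev) - (s_cur - s_prev)
        # Move the running estimate 1/16 of the way towards |d|.
        jitter += (abs(d) - jitter) / 16.0
    return jitter


def packet_loss_percent(received_seq: List[int]) -> float:
    """Percentage of packets missing between the lowest and highest
    sequence numbers seen (no wraparound handling)."""
    if not received_seq:
        return 0.0
    expected = max(received_seq) - min(received_seq) + 1
    lost = expected - len(set(received_seq))
    return 100.0 * lost / expected


if __name__ == "__main__":
    # Packets sent every 20 ms; the last one arrives 16 ms late.
    print(interarrival_jitter([(0, 50), (20, 70), (40, 106)]))
    # Sequence number 3 never arrived.
    print(packet_loss_percent([1, 2, 4, 5]))
```

A perfectly steady stream gives a jitter of zero no matter how high the latency is, which is why latency and jitter are worth looking at as separate problems.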

What about Teams then?

Teams is a cloud-based platform, so everything is over the internet. How can that be better? One thing Microsoft keep saying when it comes to Teams is that “Teams is designed from the ground up to work over the internet”. But how?

Behind the Teams client that we see and use every day is Microsoft’s biggest gun: the Azure network.

The Azure network is huge and powerful. It is often described as the 2nd biggest network in the world, just behind the internet. There are private dark fibres between all the datacentres that Microsoft own, plus peering with a long list of ISPs globally. It has WAN links with speeds of up to 172Tbps and has POPs (Points of Presence) strategically placed around Microsoft datacentre regions to pull traffic onto the Microsoft network early, bringing the datacentre closer to the customers.

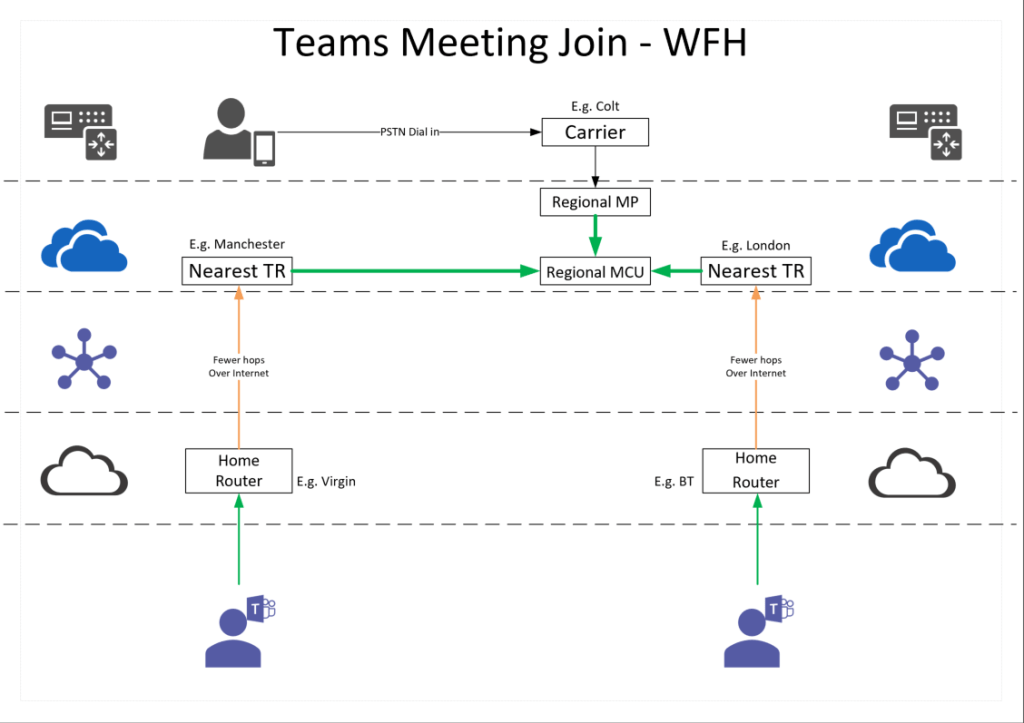

Specific to Teams, there are Transport Relays spread all over the world in the Azure network. They are then connected to a mesh of Media Processors and Conference MCUs in regional main datacentres in North America, Europe, APAC and Japan.

When a Teams client tries to join a meeting, or a call, the first thing it does is discover and locate the Microsoft Transport Relay that is “closest” to the user endpoint. “Closest” could mean geographic distance or speed of response. Then the peering between ISPs and Microsoft means it’ll take fewer hops for the traffic to get to Microsoft. Once there, it’d be on the Microsoft highway to get to the MCU in the Azure network.
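To picture the “speed of response” flavour of “closest”, here is a small sketch that picks whichever relay answers fastest. To be clear, this is not how the Teams client actually does its discovery; I am only illustrating the idea. The function `pick_closest_relay`, the `probe` callback, and the relay names are all hypothetical.

```python
import statistics
from typing import Callable, Iterable, Optional


def pick_closest_relay(
    relays: Iterable[str],
    probe: Callable[[str], Optional[float]],
    attempts: int = 3,
) -> Optional[str]:
    """Return the relay with the lowest median round-trip time.

    probe(relay) should return an RTT in milliseconds, or None on timeout.
    Returns None if no relay answered at all.
    """
    best_relay: Optional[str] = None
    best_rtt = float("inf")
    for relay in relays:
        # Probe several times and drop the attempts that timed out.
        samples = [
            rtt for rtt in (probe(relay) for _ in range(attempts))
            if rtt is not None
        ]
        if not samples:
            continue
        # The median is less sensitive to one unlucky slow probe.
        rtt = statistics.median(samples)
        if rtt < best_rtt:
            best_relay, best_rtt = relay, rtt
    return best_relay


if __name__ == "__main__":
    # Made-up relay names and RTTs standing in for real network probes.
    fake_rtts = {
        "relay-a.example": 48.0,
        "relay-b.example": 12.0,
        "relay-c.example": None,  # never responds
    }
    print(pick_closest_relay(fake_rtts, fake_rtts.get))
```

Note that the relay with the fastest response is not necessarily the one nearest on the map, which is why “closest” is in quotes.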

In comparison to Skype on-prem, although the traffic is still going over the internet, the user endpoints don’t have to cross the big bad internet without any special treatment (peering) to get to a fixed location. Instead, they only need to get to their “nearest” Microsoft Transport Relay to get on the Microsoft highway, and relax. The network path to the MCU is much more efficient and, therefore, delivers better meeting quality in Teams.

There are so many other topics we could expand on from here: Teams media path, codecs, quality of experience, corp network design, etc. We’ll get to them in future posts.

Finally, before I let you go, I will leave you with a little tip, my personal favourite Teams meeting trick: take the meeting with you on your mobile. (Although the voice quality won’t be the same even if you use the same certified headset. Want to know why? Read my other post here.)

I hope I have been helpful. Thank you for reading!

References:

https://marckean.com/2018/11/13/microsoft-azure-has-the-best-global-wan/

https://tomtalks.blog/2019/06/where-in-the-world-will-my-microsoft-teams-meeting-by-hosted/

https://www.djeek.com/2018/01/microsoft-teams-and-the-protocols-it-uses-opus-and-mnp24/

https://docs.microsoft.com/en-us/azure/networking/microsoft-global-network
